Back in February, I made a very popular post which compared several implementations of the Ruby language. More than 9 months later, I’m back with a brand new shootout. Fasten your seat belts, it’s going to be fun.

A bit of history

When I first published a comparison between the various virtual machines and interpreters for Ruby, many of these projects were in their infancy. With the main Ruby interpreter (aka MRI) considered to be fairly slow and with many new implementations that were released in an attempt to address this issue, there was real reason within the community to be genuinely curious about how all these projects measured up. Yarv (incorporated in Ruby 1.9) was the fastest by a long shot, but almost all projects showed a bright and promising future.

Things were moving fast with these young and exciting projects, so much so that I received all sorts of requests to run a new benchmark within a month of the original being published. Testing all these virtual machines is a lot of work at night, but I was willing to give it another go. Unfortunately, the machine that I use for running the tests decided to die. It was supposed to be fixed under warranty, but after wasting a lot of my time (I’ll spare you the story), I ended up fixing it myself.

After that, things got rather busy and the shootout was put on hold for far too long. The downside of this is that many people come from Google looking for the benchmark results and find that, for example, Rubinius wasn’t that fast and they may in turn write it off because of this. This isn’t really fair, since those results are outdated.

February Results

With Ruby 1.9 coming out for Christmas, now is the time to show the community new numbers and do a reality check to see where we are with these projects. Take a good look at the old numbers in the figure above; you’ll be pleasantly surprised by the new ones listed below.

Disclaimer

As with the previous shootout, before proceeding I feel obliged to remind you that:

- Don’t read too much into this and don’t draw any final conclusions. Each of these exciting projects has its own reason for being, as well as different pros and cons, which are not considered in this post. They each have a different level of stability and completeness. Furthermore, not all of them have received the same level of optimization yet (e.g. Yarv is optimized so that it shines in these tests). Take this post for what it is: an interesting and fun comparison of Ruby implementations;

- The results may entirely change in a matter of months. There will be other shootouts in this blog. If you wish, grab the feed;

- The scope of the benchmarks is limited because they can’t stress every single feature of each implementation. They’re just a sensible set of benchmarks which give us a general idea of where we are in terms of speed, though really they aren’t capable of being overly accurate when it comes to predicting real world performance;

- These tests were run on my machine, your mileage may vary;

- In this article, I sometimes blur the distinction between virtual machine and interpreter by simply calling them “virtual machines” for the sake of simplicity.

Implementations and Testing Environments

I decided to test only promising and active projects with lively communities around them. IronRuby and Cardinal were not included in my testing. IronRuby is in pre-alpha mode, so it will be included at some stage, but it’s not here now.

On Linux (Ubuntu 7.10 x86), I tested the following:

- Ruby 1.8.6 (2007-09-24 patchlevel 111)

- Ruby 1.9.0 (from trunk, 12/02/2007)

- Rubinius 0.8.0 (from trunk, 12/02/2007)

- JRuby (from trunk, 12/02/2007)

- XRuby 0.3.2

Both JRuby and XRuby were run on JDK 6 with 4MB of stack size, and I found that the optional -J-server for JRuby significantly improved performance across the board. None of the tests took startup times into consideration. Rubinius was tested by running the already compiled .rbc files.
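To illustrate what leaving startup time out of a measurement can look like, here is a minimal sketch. It is not the harness used for this shootout: the `time_in_process` helper and the `fib` workload are hypothetical stand-ins. The idea is simply that the clock starts only once the interpreter or VM is already running.

```ruby
require 'benchmark'

# Hypothetical helper (not from the actual shootout harness): times a block
# inside the already-running interpreter, so VM startup is not measured.
def time_in_process
  Benchmark.realtime { yield }
end

# Stand-in workload; the real tests are the bm_*.rb files listed below.
def fib(n)
  n < 2 ? n : fib(n - 1) + fib(n - 2)
end

elapsed = time_in_process { fib(20) }
puts format('fib(20) took %.4f s (startup excluded)', elapsed)
```

Timing the whole process from the shell instead would add the startup cost to every result, which penalizes the Java based implementations in particular.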

On Windows XP SP2, I tested Ruby.NET 0.9 on the .NET Framework 2.0 and then Ruby 1.8.6 (p-111) for comparison. I tested Ruby.NET 0.9 with Mono on Linux, but I mostly got timeouts or errors, and since version 0.9 is not officially supporting Mono yet, I decided not to include it.

My Linux tests were conducted on an AMD Athlon™ 64 3500+ processor, with 1 GB of RAM (the same machine used for the previous benchmarks). The Windows tests were run on an Intel Core Duo L2400 1.66GHz with 2 GB of RAM. Don’t try to compare the results between Linux and Windows, as I employed different hardware for them.

Tests

I used the same 41 tests previously used to benchmark the various Ruby implementations. These can be found within the benchmark folder in the repository of Ruby 1.9. The following is a list of tests with a direct link to the source code for each of them:

- bm_app_answer.rb

- bm_app_factorial.rb

- bm_app_fib.rb

- bm_app_mandelbrot.rb

- bm_app_pentomino.rb

- bm_app_raise.rb

- bm_app_strconcat.rb

- bm_app_tak.rb

- bm_app_tarai.rb

- bm_loop_times.rb

- bm_loop_whileloop.rb

- bm_loop_whileloop2.rb

- bm_so_ackermann.rb

- bm_so_array.rb

- bm_so_concatenate.rb

- bm_so_count_words.rb

- bm_so_exception.rb

- bm_so_lists.rb

- bm_so_matrix.rb

- bm_so_nested_loop.rb

- bm_so_object.rb

- bm_so_random.rb

- bm_so_sieve.rb

- bm_vm1_block.rb

- bm_vm1_const.rb

- bm_vm1_ensure.rb

- bm_vm1_length.rb

- bm_vm1_rescue.rb

- bm_vm1_simplereturn.rb

- bm_vm1_swap.rb

- bm_vm2_array.rb

- bm_vm2_method.rb

- bm_vm2_poly_method.rb

- bm_vm2_poly_method_ov.rb

- bm_vm2_proc.rb

- bm_vm2_regexp.rb

- bm_vm2_send.rb

- bm_vm2_super.rb

- bm_vm2_unif1.rb

- bm_vm2_zsuper.rb

- bm_vm3_thread_create_join.rb

On top of these, I also ran a simplified bm_app_factorial called bm_easier_fact in which n=4000 in order to verify the speed for implementations which had trouble with n=5000. Ed Borasky’s matrix benchmark is an example of a more engaging script and therefore was also included (with n=50).

The results

Without further ado, let’s take a look at the results. The following table shows the execution time expressed in seconds, on Linux:

Green, bold values indicate that the given virtual machine was faster than Ruby 1.8.6 (our baseline) and a yellow background indicates the absolute fastest implementation for a given test. Timeout indicates that the script didn’t terminate in a reasonable amount of time and had to be interrupted. The values reported at the bottom are the total amounts of time (in seconds) that it took to run the common set of benchmarks which were successfully executed by every virtual machine. In other words, the set of tests that were passed by each one on Linux.
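As a rough sketch of how totals over the common set can be computed, consider the snippet below. The timings are made up for illustration (they are not results from this shootout), and the names `SAMPLE_RESULTS`, `common_tests` and `totals` are hypothetical. A `nil` stands for an error or a timeout.

```ruby
# Made-up timings in seconds, for illustration only; nil marks an error or timeout.
SAMPLE_RESULTS = {
  'ruby-1.8.6' => { 'bm_a' => 2.0, 'bm_b' => 4.0, 'bm_c' => 1.0 },
  'ruby-1.9.0' => { 'bm_a' => 1.0, 'bm_b' => 1.0, 'bm_c' => nil },
  'jruby'      => { 'bm_a' => 2.5, 'bm_b' => 2.0, 'bm_c' => 0.5 }
}

# Tests that every implementation completed successfully.
def common_tests(results)
  all_tests = results.values.flat_map(&:keys).uniq
  all_tests.select { |test| results.values.all? { |times| times[test] } }
end

# Total time per implementation, restricted to the common set.
def totals(results)
  common = common_tests(results)
  results.map { |vm, times| [vm, common.sum { |test| times[test] }] }.to_h
end

totals(SAMPLE_RESULTS).each { |vm, total| puts "#{vm}: #{total} s" }
```

Here `bm_c` is dropped from every total because one implementation failed it, so no virtual machine is rewarded for skipping a test.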

Here are the ratios of the Ruby implementations based on the main Ruby interpreter:

The baseline (Ruby 1.8.6) time is divided by the given time to obtain a number that tells us “how many times faster” a given implementation is. 2.0 means twice as fast, while 0.5 means half the speed (so twice as slow). The geometric mean at the bottom of the table tells us “on average” how much faster or slower a virtual machine was when compared with the main Ruby interpreter. Just as with the totals above, only the tests which were successfully run by each one were included in the calculation. Since Ruby 1.8.6 raised an error due to the stack being too deep, I used the value from Ruby 1.8.5 as a baseline for that one app_factorial test.
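The ratio and the geometric mean described here can be sketched in a few lines. The numbers are invented for illustration, and the `speedup` and `geometric_mean` helpers are hypothetical names rather than anything from the original spreadsheet.

```ruby
# Ratio as described above: baseline time divided by the given time.
# 2.0 means twice as fast as the baseline, 0.5 means half the speed.
def speedup(baseline_time, time)
  baseline_time / time
end

# Geometric mean, computed through logarithms: exp(mean(log(x))).
def geometric_mean(values)
  Math.exp(values.sum { |v| Math.log(v) } / values.size)
end

# Invented timings in seconds for two tests.
baseline = { 'bm_a' => 2.0, 'bm_b' => 4.0 }
other    = { 'bm_a' => 1.0, 'bm_b' => 8.0 }

ratios = baseline.keys.map { |test| speedup(baseline[test], other[test]) }
puts "ratios: #{ratios.inspect}"
puts "geometric mean: #{geometric_mean(ratios)}"
```

With one test twice as fast and one twice as slow, the geometric mean comes out at about 1.0 (no change overall), whereas the arithmetic mean of the same ratios would be 1.25 and would overstate the improvement. That is the reason for preferring the geometric mean when averaging ratios.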

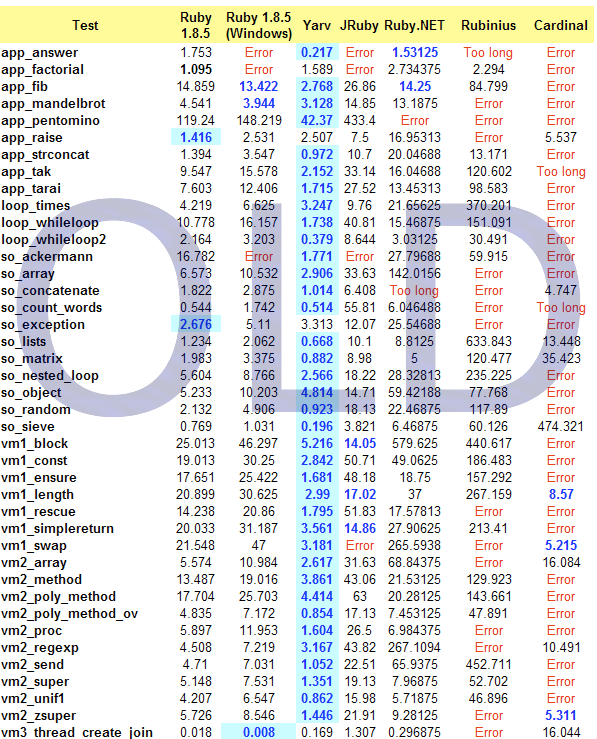

For those who are curious about Ruby.NET 0.9 on Windows, here are the results:

Observations

You have the numbers, so feel free to make up your mind about them and comment freely below. That said, I’ll briefly share a few considerations of my own with you.

First: WOW! Where do I start?

Ruby 1.9

Ruby 1.9 confirms itself as a fast implementation, at about three times the speed of 1.8.6, which it is going to replace in 3 weeks. Ruby 1.9 (Yarv) is still the fastest of the lot in most tests, but others are clearly catching up. We have been waiting for a long time for this version of Ruby and it’s sure not to disappoint. A great Christmas present for Ruby lovers.

JRuby

JRuby is truly impressive, being the only implementation that’s able to pass all the tests without raising errors or timing out. Not only that, but in my previous benchmark, JRuby was several times slower than 1.8.5, whereas now it’s faster than the main Ruby interpreter. There have been huge improvements going on over the last few months. Really, just compare the times in the old table with the ones in the new table: unbelievable progress. JRuby is the only one, aside from 1.8.6, which is able to run Rails in production. I wouldn’t be surprised if JRuby turned out to be, on average, just as fast as Ruby 1.9, in a year or so.

XRuby

Where the heck did this come from? You may not have heard about this project, but we’d better start paying attention. XRuby is the youngest by far, and while still an “immature” implementation, it happens to be damn impressive from a performance standpoint. Its speed is comparable to that of JRuby (they are both Java based) and it was the fastest of the lot in 4 tests. Hats off to Xue Yong Zhi. We’ll watch this one closely.

Rubinius

Looking at the old table and then at the new one, it’s hard to believe that the same implementation could improve so much in such a short period of time. In 16 tests it actually managed to be faster than Ruby 1.8.6. How fast will Rubinius be in a year? I personally consider Rubinius to be one of the most promising Ruby implementations out there.

Ruby.NET

As mentioned above, Ruby.NET with Mono on Linux was unusable. However, on Windows, performance was decent and has improved since last time (even though the hardware for Windows was different, so we can’t scientifically compare them). .NET and Mono are not inherently slow, therefore with the right amount of optimization, I feel that we can improve those numbers. As I said before, let’s give Ruby.NET some love.

All in all, these are impressive numbers and improvements. We all know that Ruby is not going to be a particularly fast language, that’s not its aim, but for a language whose Achilles’ heel is being slow, every little bit helps to make it more usable in the real world. And it’s not only a matter of performance. Alternative implementations carry many other advantages, like the possibility of integrating Ruby within an existing Java or .NET infrastructure. My public congratulations to all of those who worked hard to give us these viable options.

Now go out there and spread the word about these results. Let’s show the world what the Ruby community has been up to in terms of seriously resolving one of the biggest issues that still concerns this otherwise great programming language.

Update

Please find here the results of my benchmarks in Excel and PDF format.

Update 2

Now available in Italian, too.

You may want to try out mingw build for windows, as it’s faster. (doachristianturndaily.info/ruby_distro)

Hello,

Which part of Ruby.NET was unusable on Mono/Linux?

Also, have you considered testing with IronRuby on Mono/Linux?

Miguel

Hi Miguel,

thanks for stopping by. 🙂

16 out of the 43 tests resulted in Errors/Timeouts. And the majority of the remaining ones were significantly slower than the rest of the group. It is most likely that during the next test run I’ll include both Ruby.NET and IronRuby on Mono.

For performance there are other considerations needed, besides pure speed:

– type erasure

– partial application

– the ability to create native code

Of course dynamic languages have their place!

But some of the optimizations associated with ‘static’ languages do not have to be that exclusive.

Especially type erasure can give you a performance benefit that there is simply no way of working ‘around’.

Although in Ruby types can’t always be inferred without hitting the halting problem, we can at least attempt to infer the types for any block _before_ executing it.

That’s the kind of stuff that could really put Ruby in a completely different league performance-wise. Even the current JVMs do not do type erasure.

How do their memory usages compare? Ruby 1.9 just sounds too good to be true! There has to be a catch, right?

I am really impressed with JRuby’s results. I tested an old version – before the compiler – and the performance was terrible! Time to get to it again!

Thanks for sharing!

Thanks for writing this — I was complaining earlier today that there were no good benchmarks for all the latest implementations. Thanks for reading my mind!

Ruby 1.9 is the best implementation for now. It’s great. Ruby 1.9 will be available shortly.

btw: XRuby is not the youngest implementation; it started in 2005 but was published on Google Code in 2006. Here is a post written by the author of XRuby (in Chinese):

http://www.ruby-lang.org.cn/forums/thread-2278-1-1.html

you may need Google Translation. 🙂

Hi there. Very useful information. It’s nice to see these kind of posts as opposed to “Ruby smokes xyz” which are getting really, really boring.

As a Ruby enthusiast, I am very excited about 1.9 and am interested in how 1.9 stands against its predecessors, not against other languages… Anyway, one little question: why is JRuby the only VM that can run a Rails app in production? Does that mean 1.9 cannot? Why?

Cheers!

Carlos: Ruby 1.9 makes many incompatible changes to Ruby APIs and syntax. Many of these changes were specifically to improve performance at the cost of functionality.

It will probably be some time before all libraries and apps needed to run Rails are migrated over to the 1.9 features.

Very interesting post that could be getting even more interesting if we make some code changes here and there. For example:

bm_app_factorial.rb:

ruby -e 'class Integer; def fact; f=1; (2..self).each { |i| f *= i }; f; end; end; 8.times{5000.fact}'

(No real need for recursiveness here.)

bm_so_sieve.rb:

Sieve of Eratosthenes in Ruby

http://snippets.dzone.com/posts/show/3734

Ruby 1.9 seems like a very big improvement. One thing makes me wonder though: its performance in the vm3_thread_create_join test, where it shows only 14% of the performance of the reference 1.8.6 version. How is that possible given that 1.9 leaves 1.8.6 in the dust in every other test performed?

gemRuby ftw!

😛

A curious thing about JRuby is extremely slow startup (Java…sigh) and two levels of JIT compilation.

In application-level benchmarks, this means that performance keeps changing (getting better) for the first 30-50 seconds of a test run. And the difference between the first thousand iterations and the last thousand is on the order of 1.5-2 times.

It may not make that much difference in low-level benchmarks (because iterations are that much shorter and JIT should be kicking in earlier). I still wonder: are you simply doing “time foo.rb”, or do you run those scripts with some warmup period?

Don:

> its’ performance in vm3_thread_create_join test,

> where it shows only 14% of the performance of the reference 1.8.6 version

Green threads are much cheaper to create than native ones. Which is almost the only advantage of green threads, and is relatively easy to work around, with thread pools.

Don,

It seems that Ruby 1.9 now uses system (kernel) threads instead of the green-threads model of 1.8.6. This is also why JRuby takes nigh an hour to install the Rails gem and its dependencies. Kernel threads are much slower to initialize and use than green threads.

Can’t wait for Ruby 1.9!

If what Charles says above is true (“Ruby 1.9 makes many incompatible changes to Ruby APIs and syntax. Many of these changes were specifically to improve performance at the cost of functionality.”) then it seems a bit unfair to compare the other implementations. Surely they can (and hopefully will) make the same changes and improve their performance at the same time when the libs have been ported and/or on a separate, parallel branch.

At least I think this (if my understanding is correct) should be mentioned in the shootout.

Jason: Rails takes a while to install largely because of rdoc generation, which is hitting an as-yet-undiscovered bottleneck in JRuby. It’s not related to green threads versus native threads, and in fact I don’t think a single thread is spawned during rdoc generation. And it’s nowhere near an hour.

dave: We can and will make the same optimizations…but for now, they’re on the back burner. Almost everyone in the world is going to be running Ruby 1.8 for some time, and at the moment we’re the fastest Ruby 1.8-compatible implementation around. Not bad.

@niko: I believe the tests are purposefully done in the way they are – the factorial function tests the stack speed and general recursive function speed of each interpreter. Not sure exactly what the goal is on sieve, so I might be wrong there.

Anyway, great tests!

Just wondering if you’d like to do an updated benchmark again, now that we’re in 2010! Rubinius has indeed improved immensely as per your predictions, and I’m curious to see how it (and others) perform under some carefully-assembled tests. Thanks for the benchmarks so far!